Don’t Buy the AI Hype — Buy the Honest Conversation

Sit through enough software demos and a pattern starts to emerge. Somewhere between the slide on streamlined workflows and the one about real-time visibility, the presenter leans forward and drops the phrase: AI-powered. The room nods. Someone scribbles it down. And the question nobody says out loud is — what does that actually mean?

To be fair, AI is genuinely changing enterprise software. Real progress is happening in how systems learn from data, flag problems early, and cut down on manual grunt work. This isn’t an argument that AI is all smoke and no fire. It’s an argument that not all AI is the same thing — and that mid-market buyers are getting a raw deal when it comes to telling the difference.

The Pressure to Lead with AI

Mid-market ERP and CRM vendors are caught in a tough spot. Enterprise players have poured billions into AI, and buyers of every size are now asking their vendors the same questions about it. So “AI-powered” has quietly shifted from a technical description to a marketing checkbox: something that needs to show up on the website, in the pitch deck, and in the renewal conversation, whether the product genuinely justifies it or not.

This isn’t a dig at any one vendor. It’s the water the whole industry is swimming in right now. When buyers expect AI and competitors are claiming it, stretching the definition becomes hard to resist. The result is a market where “AI-powered” can mean anything from a genuinely sophisticated machine learning model to a rebranded reporting dashboard. Both might be useful. But they’re not the same thing, and they shouldn’t carry the same price tag.

What “AI-Powered” Often Looks Like in Practice

A few patterns come up again and again:

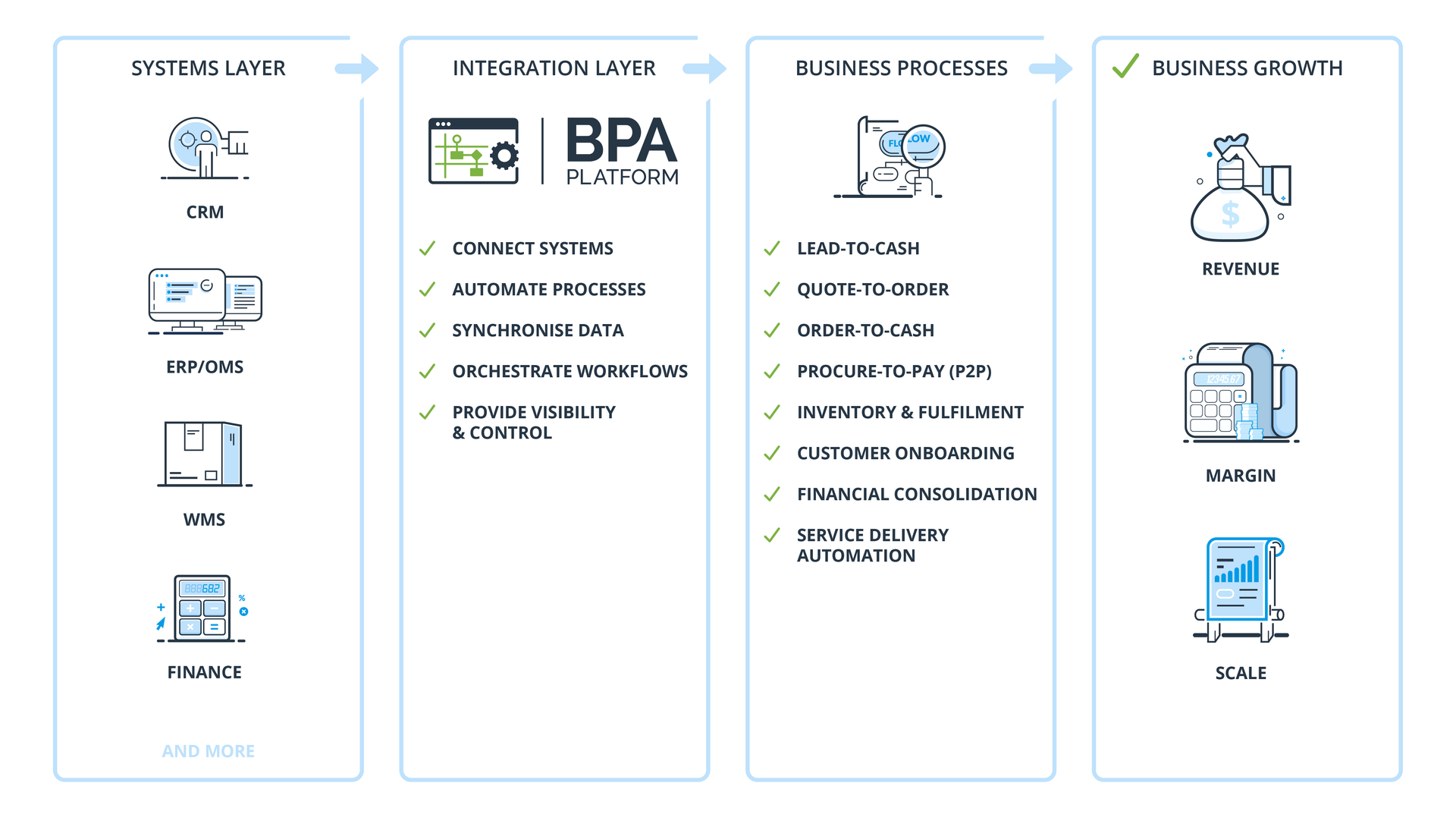

Predictions that are really just history repeating. If a system flags a customer as “at risk” because their order frequency dropped, that’s not a prediction — that’s a report. Useful, sure, but it’s been available for years. Platforms like BPA Platform have delivered exactly this kind of data-driven alerting and exception reporting through straightforward business rules and workflow logic — long before anyone was calling it AI. The capability was always real. The rebrand is what’s new.

Automation dressed up as intelligence. Routing an invoice to the right approver based on a spend threshold. Triggering a follow-up when an order status changes. Escalating a support case that’s been sitting too long. These are rules-based processes — and BPA Platform handles them through its codeless automation engine without needing a machine learning model anywhere near them. They’re reliable, auditable, and they work. When vendors slap an AI label on this kind of automation, it doesn’t make the feature more powerful. It just makes the buying conversation murkier.
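To make the distinction concrete, here is a minimal sketch of what the first two patterns can look like underneath. It is illustrative only: the function names, thresholds, and approver roles are hypothetical, and it is not how BPA Platform or any other product is implemented. The point is that nothing in it learns from data. Every outcome follows from a rule a person wrote down.

```python
from dataclasses import dataclass


@dataclass
class CustomerOrders:
    name: str
    orders_prior_quarter: int
    orders_last_quarter: int


def at_risk(customer: CustomerOrders, drop_threshold: float = 0.5) -> bool:
    """Flag a customer whose order count fell by at least drop_threshold.

    This is a report on what already happened, not a prediction.
    The 50% default is an assumed value for illustration.
    """
    if customer.orders_prior_quarter == 0:
        return False  # no baseline to compare against
    drop = (
        customer.orders_prior_quarter - customer.orders_last_quarter
    ) / customer.orders_prior_quarter
    return drop >= drop_threshold


def route_invoice(amount: float) -> str:
    """Pick an approver from fixed spend thresholds (hypothetical roles)."""
    if amount < 1_000:
        return "team_lead"
    if amount < 10_000:
        return "department_head"
    return "finance_director"


customers = [
    CustomerOrders("Acme", orders_prior_quarter=12, orders_last_quarter=4),
    CustomerOrders("Birch", orders_prior_quarter=10, orders_last_quarter=9),
]
flagged = [c.name for c in customers if at_risk(c)]
```

Rules like these are easy to audit, because anyone can read the threshold and see why Acme was flagged or why an invoice went where it did. That is a strength. It just isn't machine learning, and it is fair to ask a vendor which of the two you are paying for.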

Generative AI bolted on rather than built in. The scramble to add large language model features to existing products has produced some genuinely useful results — and some that are basically a chat window glued onto software that hasn’t fundamentally changed underneath. The question worth asking isn’t whether there’s a generative component. It’s whether it’s working from relevant data, wired into actual workflows, and backed by someone who’ll own the problem when it gets something wrong.

A Simple Framework for Evaluating AI Claims

You don’t need a data science background to push back on what vendors are telling you. A handful of direct questions will do most of the work:

Whose data is it learning from — ours, or a generic model? A system trained on your business behaves very differently from one drawing on industry-wide averages.

What happens when it gets it wrong? Every AI system makes mistakes. How a vendor answers this question says a lot about how seriously they’ve thought it through.

Who owns it when something breaks or changes? Features tied to third-party models can shift behaviour when those models are updated. That’s a support question, not a technical footnote.

Can we see it running in a live environment? Demo environments are controlled by design. Asking to speak with a reference customer who uses the feature in production is a completely fair request — and the answer tells you a lot.

So, What Should You Actually Do?


None of this is a case for tuning out AI conversations entirely. Informed skepticism is different from blanket cynicism. AI is developing fast, and what isn’t quite there yet could look very different in two or three years. The vendors worth watching are the ones building seriously on solid data foundations — and being straight with customers about what’s ready and what isn’t.

The ones worth being cautious about are using AI language mainly to justify price rises, paper over product gaps, or match a competitor’s latest press release.

Your business deserves sharper questions than that. Ask them. The vendors with real answers won’t mind.

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Full disclosure: this blog was written with the help of Claude, Anthropic’s AI assistant. Yes, we’re aware of the irony — a post about not blindly trusting AI was drafted with the help of AI. But that’s rather the point. Used thoughtfully, with a human steering the ideas, challenging the output, and rewriting the bits that sounded like a robot trying to sound like a person, AI can be a genuinely useful tool. It didn’t write this. It helped write this. There’s a difference — and that difference is exactly what this blog is about.